Australia is currently embarking on a process to define a methodology for assessing the impact and engagement of its research. This process features two research and engagement working groups, a performance and incentives working group, and a technical working group, of which Jonathan Adams, Digital Science’s Chief Scientist, is a member. In light of this process, it is useful to reflect on the effect that assessment exercises have on a research institution’s internal information management practices.

Within Australia, yearly government publication reporting exercises have been partly responsible for the sector having one of the most advanced approaches to publications reporting internationally. As early adopters of advanced publications management systems (such as Symplectic Elements), Australian institutions have leveraged the information they have collected to deliver more than just government reporting. This wide command of an institution’s publications has allowed Australian universities to deploy the information for sophisticated internal reporting, as well as to create systematic, up-to-date public profiles for all of their researchers. Publications reporting is now so embedded in Australian practice that reporting to government could be considered a byproduct of information a university collects for its own purposes.

Although the Australian Research Council’s (ARC) approach to measuring impact and engagement is yet to be defined and refined, it is not inconceivable that at least part of the evidence-based information it requires will need to be newly collected. Following the template that publications reporting has established, we should also ask: what additional value does this information add to an institution? In what other ways could it be incorporated into the institution? Without pre-empting what may or may not be included in the ARC’s new assessment process for impact and engagement, we can get some feel for these questions by considering the additional uses of the information collected in Symplectic’s new Impact Module, and by looking at the opportunities that systematic reporting on altmetrics (as one measure of engagement) can provide.

Exploring the multiple uses of impact data

At the end of 2015, Symplectic introduced an Impact Module to its Elements product. This module helps researchers and administrators capture a narrative of emerging evidence of research impact. The evidence is recorded in a structured way, with links to research activity including grants and publications. Created at least in part as an acknowledgment of the enormous effort the UK sector invested in retroactively creating research impact narratives, the Impact Module aims to make it easier to record evidence of impact as it happens. Making impact easy to record is only one part of the equation, however, as the prospect of periodic assessment is not enough to motivate most researchers to keep their impact profile up to date. To this end, Symplectic is committed to working with the community to establish how impact narratives can be reused. Examples of reuse might include publishing appropriately reviewed impact summaries on public profile pages, supporting internal requests for stories on research, completing impact statements as part of grants reporting, and incorporating impact reporting into annual performance reviews.

Exploring multiple uses of engagement data

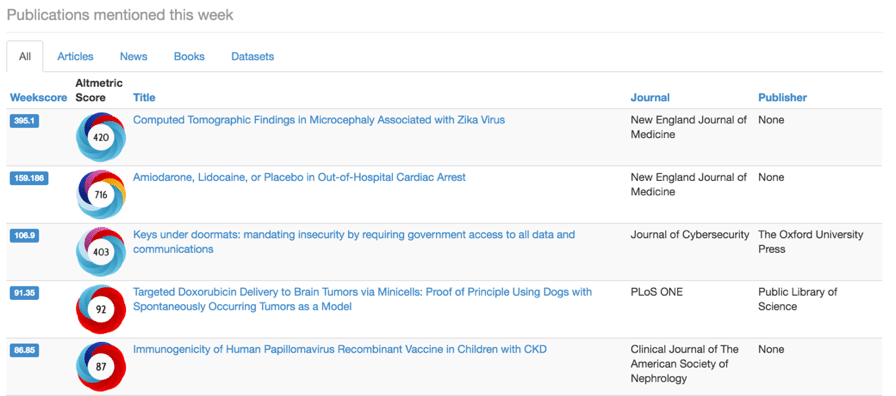

On the question of how a research institution can compare and benchmark its engagement profile, one approach might be to leverage the daily feed of attention data on publications that is available as part of the Altmetric Explorer for Institutions. Through the use of a publication disambiguation service, or integration with an up-to-date institutional publications collection, it is possible to create an aggregate daily attention measure that can be used to compare institutions.

Rolling Weekly Altmetric Activity Sep 15 – Mar 16
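As a rough illustration of how such an aggregate measure could be built, the sketch below sums daily mention counts per institution over a rolling seven-day window. It is only a minimal sketch under stated assumptions: the `(institution, day, mentions)` record shape and the `rolling_attention` function are hypothetical, not the Altmetric Explorer data format or API, and it presumes publications have already been matched to institutions.

```python
from collections import defaultdict
from datetime import date, timedelta


def rolling_attention(records, window_days=7):
    """Return {institution: {day: mentions in the window ending on that day}}.

    `records` is an iterable of (institution, day, mentions) tuples.
    This shape is an assumption for illustration, not a real export format.
    """
    # Collapse per-publication records into one daily total per institution.
    daily = defaultdict(lambda: defaultdict(int))
    for institution, day, mentions in records:
        daily[institution][day] += mentions

    rolling = {}
    for institution, counts in daily.items():
        first, last = min(counts), max(counts)
        series = {}
        day = first
        while day <= last:
            # Sum the current day and the (window_days - 1) days before it;
            # days with no recorded attention count as zero.
            series[day] = sum(
                counts.get(day - timedelta(days=offset), 0)
                for offset in range(window_days)
            )
            day += timedelta(days=1)
        rolling[institution] = series
    return rolling


# Hypothetical sample data: two institutions, a handful of daily counts.
records = [
    ("Uni A", date(2016, 3, 1), 5),
    ("Uni A", date(2016, 3, 2), 3),
    ("Uni A", date(2016, 3, 9), 2),
    ("Uni B", date(2016, 3, 1), 4),
]
weekly = rolling_attention(records)
```

Plotting each institution's series on the same axes gives a comparison of the kind the chart above suggests. A fair benchmark would probably also need to normalise for institution size or publication volume, which this sketch does not attempt.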

Investing time and resources in new ways to measure engagement requires justification, however, especially when the methodology is still being established. Again, looking beyond institutional and government reporting, command over an institution’s engagement profile may provide additional benefits that justify the investment. The same techniques used to profile engagement can also be used to identify research that is being talked about ‘right now’. Armed with this information, universities gain a new ability to send ‘research of interest’ communications to alumni and prospective students.

A template for considering impact and engagement

Although only lightly treated in this post, these considerations provide a template for taking a holistic approach to measuring impact and engagement. In 2016, the Australian government ceased its annual publication collection. To the best of my knowledge, there isn’t an Australian institution that has used the lack of government mandate to cease university-wide publication collection, such is the value of the information. This is perhaps a key indicator of an effective evaluation exercise. When this next Impact and Engagement exercise is eventually retired, it can be hoped that it leaves the research sector similarly enhanced.