
Standing on the Digits of Giants: Another Excellent Cross-Stakeholder Discussion in Scholarly Communication

Last week, I spoke at an ALPSP seminar that was jointly organized with the Digital Preservation Coalition (DPC) and ably moderated by William Kilbride, the Executive Director of the DPC. Kilbride stated that two of the challenges the DPC faces in its mission to ensure preservation of the scholarly record are difficulties in engaging with publishers and getting caught up in the Open Access (OA) debate. Specifically, he was interested in knowing how publishers think about preservation of the scholarly record. Does the industry think that the problem is solved? Does it need to be solved? Obviously, it does.

The subject of my talk was Transformations in Scholarly Communications. That’s a pretty broad title given the storied history of the industry. I eschewed the temptation to give a history lecture and chose instead to focus on how scholarly communication is currently changing. Specifically, I talked about the growth of open science and open data, the reasons why there is currently a surge of interest, and how we might change current workflows and incentive structures to enable it. In particular, I argued that data sharing workflows have to be as simple and intuitive as possible, and must fully integrate things like metadata creation and structure compliance from the point at which data is created. The slides for my talk, as well as the audio, are available, along with all the others from the day, at the event page on alpsp.org. You can play a fun game of guessing when each slide transition happened.

Like all the best events, last week’s seminar had a diverse lineup of speakers including librarians, archivists, publishers, technologists and academics. Robert Gurney, Professor of Earth Observation Science at the University of Reading, gave an excellent talk on the subject of open data in climate science. With all the concentration on the behaviours of those in the life sciences, I think that the perspectives of those in the physical sciences are occasionally overlooked. In particular, the idea that researchers don’t want to share data for fear of being scooped is a distinctly alien one to Professor Gurney, who explained how the sharing of data is inherent to the way his discipline operates.

Peter Burnhill, Director of EDINA, whom I seem to see only at conferences despite the fact that he works five minutes’ walk from where I live, talked about maintaining the integrity of the scholarly record. I’ve heard that turn of phrase a lot over the past couple of years, but usually in reference to the threat of so-called predatory publishers. Burnhill didn’t talk about that. Instead, he pointed out that because we’re moving towards a scholarly communication system that treats data and other digital outputs as part of the legitimate scholarly record, we have to make sure that those resources are both preserved and conserved. That is to say, for data citation to be meaningful, the link to the data must point to a resource that still exists, and the content of that resource cannot have changed. The popular terms for the two ways this can fail are ‘link rot’ (the resource no longer exists at the link) and ‘content drift’ (the resource is still there but its content has changed). Burnhill pointed out that, by design, the web is dynamic and content changes over time. As a remedy, he made the pleasant analogy that, like fish, data must be flash frozen when captured to preserve it.
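For readers who like to see ideas in code, here is a minimal sketch of how the two failure modes might be told apart. It is my own illustration, not anything Burnhill presented. It assumes that "flash freezing" can be approximated by recording a SHA-256 checksum at capture time, and that a hypothetical `fetch` function returns the current bytes behind a link, or `None` if nothing is there. The names `Snapshot`, `freeze` and `check`, and the example.org URL, are invented for the example.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Snapshot:
    """What was cited: the link plus a checksum taken at capture time."""
    url: str
    sha256: str


def freeze(url: str, content: bytes) -> Snapshot:
    # "Flash freeze": record a fingerprint of the content as it was when cited.
    return Snapshot(url, hashlib.sha256(content).hexdigest())


def check(snapshot: Snapshot, fetch: Callable[[str], Optional[bytes]]) -> str:
    # fetch is a stand-in (an assumption) for whatever retrieves the resource.
    content = fetch(snapshot.url)
    if content is None:
        return "link rot"        # the link no longer resolves to anything
    if hashlib.sha256(content).hexdigest() != snapshot.sha256:
        return "content drift"   # something is there, but it has changed
    return "intact"


# A toy "web" held in a dictionary, so the example runs without a network.
web = {"https://example.org/dataset/1": b"temperature,1.2\n"}
cited = freeze("https://example.org/dataset/1", web["https://example.org/dataset/1"])

status_now = check(cited, web.get)

web["https://example.org/dataset/1"] = b"temperature,1.3\n"
status_after_edit = check(cited, web.get)

del web["https://example.org/dataset/1"]
status_after_removal = check(cited, web.get)
```

A checksum can only tell you that something has changed, not what it used to be. Actually preserving the data still needs an archived copy of the content itself.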

Other highlights from the day included an observation from Josh Brown, Regional Director for Europe at ORCID, that it’s already possible to assign identifying metadata for researchers, institutions, grants and data to specific research projects, which we can define or specify by the DOI of the version of record.

Wendy White, Associate Director of the Hartley Library at Southampton University, identified the theme of the day, which, as she put it, was about “being in the researcher space…[to]…capture the most effective metadata”. In other words, the need to integrate data sharing and preservation into researchers’ workflows. At the same time, both she and Sarah Callaghan, Senior Research Scientist at STFC and Editor-in-Chief of Data Science Journal, reminded us that the purpose of open data is for it to be understandable and reusable in both the short and long term.

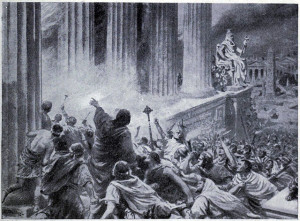

White put the challenge into context when she asked whether a future historian, a thousand years from now, might be able to read and understand a digital copy of the United Nations Universal Declaration of Human Rights. Aside from technical and language considerations, there’s also cultural context. Would a person 1,000 years from now know what the UN was, or indeed what the concept of a right is? Clearly, if we’re serious about preserving the scholarly record, we’re only just beginning to tackle the challenges that are involved.