Evolved Metrics for the New Age of Research: Digital Science Webinar Summary

As part of a continuing series, we recently broadcast a Digital Science webinar on Evolved Metrics for the New Age of Research. The aim of these webinars is to provide the very latest perspectives on key topics in scholarly communication.

The webinar focused on a number of issues surrounding the assessment of research impact. Topics covered included: NIH’s new metric to evaluate funded medical and academic research – the Relative Citation Ratio (RCR); new attempts to assess research impact; the importance of research evaluation and measurement to the scientific research community and much more.

Laura Wheeler (@laurawheelers), Community Manager at Digital Science, started the webinar by giving a brief overview of the esteemed panel and their backgrounds before handing over to Steve Leicht, from ÜberResearch, who moderated and questioned the panel.

We've just started and Its a fab line up… see https://t.co/uurHV5MRYi George Santangelo, @Stew and Mike Taylor https://t.co/QP3mqcNxH7

— Simon Kerridge (@SimonRKerridge) October 13, 2016

We were delighted to welcome Dr George Santangelo, Director at the Office of Portfolio Analysis, National Institutes of Health (NIH), to start the conversation. George started with a bold statement:

“I think, at this point, there is general agreement about journal level metrics that they’re inadequate as a way of assessing anything about individual articles… No one metric is going to adequately represent the value we obtain when we make investments in biomedical research”

He then talked about the history of the NIH, citing the San Francisco Declaration on Research Assessment (DORA) as a turning point in recognizing that journal-level metrics are inadequate.

George looked at the limitations of bibliometrics commonly used to measure the value of a publication or compare groups of publications:

- Publication Counts: field-dependent, use-independent

- Impact Factor: journal-level, not article-level

- Citation Rates: field- and journal-dependent

- h-index: field-dependent and time-dependent

“…everything published in journals with a high impact factor (>28), taken together, accounts for <11% of the most influential papers.”

George explained that we are clearly missing a lot by focusing on a small number of high-profile journals with high impact factors. So, what’s the alternative? The Relative Citation Ratio (RCR)!

To find more information on RCR, see a recent paper George co-authored, ‘Relative Citation Ratio (RCR): A New Metric That Uses Citation Rates to Measure Influence at the Article Level’, published by PLOS.

George made an important point about RCR values:

“RCR values are normalized, benchmarked citation rates that measure not the quality, importance, or impact of the work, but the influence of each article relative to what is expected given the scope of its scientific topic. The scientific topic is defined by an article’s co-citation network, which is a highly resolved and dynamic determination of the corresponding target audience.”

After information about RCR was first released, there was some very positive feedback!

“If you can fit the directions to download RCR values in a tweet then obviously it’s a pretty simple process to use the website – this was an important goal!”

Euan Adie from Altmetric was up next, so George made sure to include the Altmetric score of his 2015 paper on RCR!

RCR has gotten a lot of attention on @altmetric #DSwebinar pic.twitter.com/HqJEEUHAHH

— Digital Science (@digitalsci) October 13, 2016

At this point, it’s worthwhile noting the steps needed to calculate the RCR:

Step 1: Use the co-citation network of each article to calculate its field citation rate.

Step 2: Benchmarking against a group of peers generates an expected citation rate.

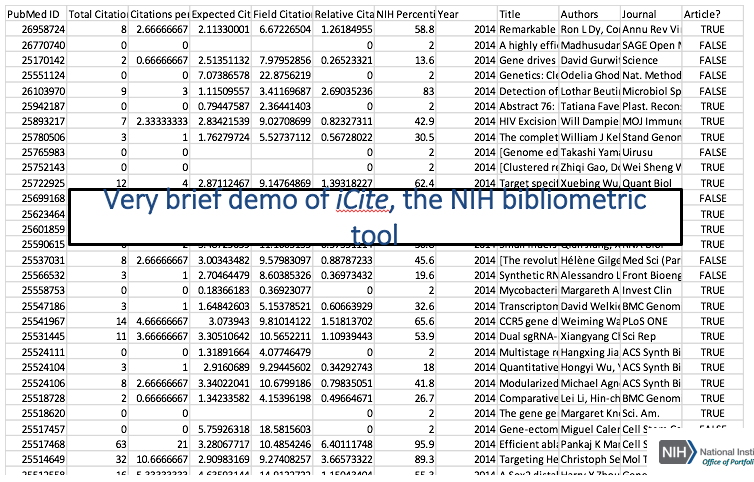
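The two steps above can be sketched in a few lines of Python. This is only an illustrative toy, not the NIH implementation: the function names, the paper identifiers and all of the numbers are hypothetical, the benchmark is modelled as a simple least-squares line through a made-up peer group, and the final division of an article's own citation rate by the expected rate is an assumption taken from the metric's name rather than from the webinar.

```python
from statistics import mean


def co_citation_network(article, references):
    """Papers that share at least one reference list with `article`.

    `references` maps each citing paper to the set of papers it cites.
    """
    network = set()
    for cited in references.values():
        if article in cited:
            network |= cited - {article}
    return network


def field_citation_rate(article, references, journal_rate):
    """Step 1: average the (toy) citation rates over the co-citation network."""
    network = co_citation_network(article, references)
    return mean(journal_rate[paper] for paper in network)


def fit_benchmark(peers):
    """Step 2: fit article_rate = slope * field_rate + intercept over peers.

    `peers` is a list of (field_rate, article_rate) pairs for a
    hypothetical benchmark group.
    """
    xs = [x for x, _ in peers]
    ys = [y for _, y in peers]
    x_bar, y_bar = mean(xs), mean(ys)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in peers) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    return slope, y_bar - slope * x_bar


def relative_citation_ratio(article_rate, field_rate, slope, intercept):
    """Assumed final step: actual citation rate over expected citation rate."""
    expected_rate = slope * field_rate + intercept
    return article_rate / expected_rate


# Toy data (all hypothetical): three citing papers and their reference lists.
references = {
    "citing1": {"A", "B", "C"},
    "citing2": {"A", "C", "D"},
    "citing3": {"B", "E"},
}
journal_rate = {"B": 2.0, "C": 4.0, "D": 6.0, "E": 10.0}
peers = [(2.0, 1.0), (4.0, 2.0), (6.0, 3.0)]

fcr = field_citation_rate("A", references, journal_rate)  # network is B, C, D
slope, intercept = fit_benchmark(peers)
rcr = relative_citation_ratio(3.0, fcr, slope, intercept)
print(f"FCR = {fcr:.2f}, RCR = {rcr:.2f}")  # FCR = 4.00, RCR = 1.50
```

In this toy case article A is cited 3.0 times per year where its benchmark peers would be expected to receive 2.0, so its ratio comes out above 1.0.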

If you’re interested in viewing publicly available RCRs then have a look at this website: iCite.od.nih.gov. A snapshot of the website’s opening page is provided below:

#DSwebinar: Publicly available RCR values: the iCite bibliometric tool (https://t.co/MtKkhd5YUA) pic.twitter.com/qsX1ix9WGL

— Digital Science (@digitalsci) October 13, 2016

George finished his illuminating presentation with a demonstration of his tools in action!

“We’re extremely pleased with the usability of our tools and the positive feedback we’ve received!”

In terms of diverse metrics, George made sure to mention that he never intended for the creation of the RCR to be the end of his team’s investment in time and resources in developing metrics to assess the outputs of NIH investments. He highlighted how they are committed to developing and using diversified metrics:

“Diversified use of metrics can contribute to research assessment: using citations to track bench-to-bedside translation”

Steve Leicht thanked George for his time and expertise:

“As a member of the community, and as a general fan of the breadth and evolution of research metrics, the thing that I appreciate the most in your approach is the fact that your team was very open in your process of discovery – you put out the preprint, actively reached out to the bibliometrics and research communities for ideas and recommendations, and you continue to make your work transparent and open, so thank you!”

Next up, we had Euan Adie, Founder and CEO of Altmetric, talking about ‘The changing metrics landscape: new outputs, new data’. Euan mentioned that he was focusing primarily on material from Altmetric, the work he’s most familiar with, but that great work is also being done elsewhere by Impactstory and Plum Analytics.

“It’s not just us doing it, but Altmetric.com is what I’ll be talking about today!”

Euan reminisced about his background in Bioinformatics as a software developer!

#DSwebinar @altmetric @Stew giving a few details about his history pic.twitter.com/DSi1CRP3i9

— Digital Science (@digitalsci) October 13, 2016

“Traditionally the way research is recognized is with citations, right? And they’re good at measuring scholarly use, but what if your focus is less on being cited in other journals but more on being cited in places like this…”

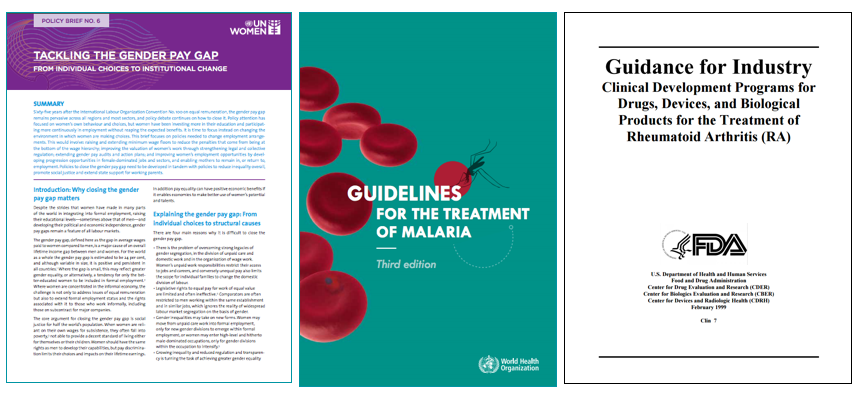

See policy documents below:

Euan made a vital point: what all these policy documents have in common with journal articles is a reference list. They cite the evidence that has gone into producing the report – the same applies to a field like education.

“You’re influencing the next generation of researchers”

Don’t forget the social influence of your work! Think of politicians like Barack Obama citing your work to win a political debate! None of this is captured in traditional citation data.

#DSwebinar @Stew talks about the power of altmetrics over a traditional citation base pic.twitter.com/tpvtKRLogC

— Digital Science (@digitalsci) October 13, 2016

Important: we need to consider the broader impacts of the research.

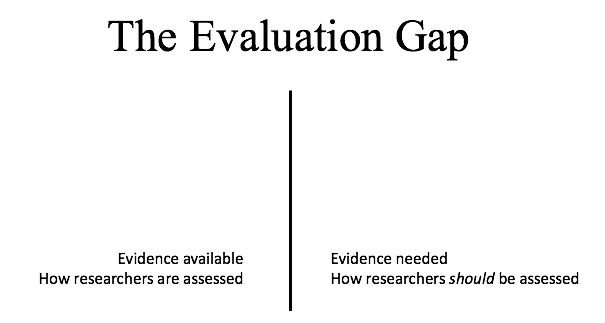

“We’re facing what has been termed ‘The evaluation gap’. On the one side, we’re saying ‘researchers should be doing great work, publishing in journals, producing data sets and writing software, and we should be recognizing the broader impact and quality of their work’ and on the other side is what’s actually available now; what tools and processes can we use to make these assessments. I’m interested in how we can fill this gap!”

"What good is quality if no one reads my paper" @Stew #DSwebinar pic.twitter.com/wrCeU9eUWl

— Digital Science (@digitalsci) October 13, 2016

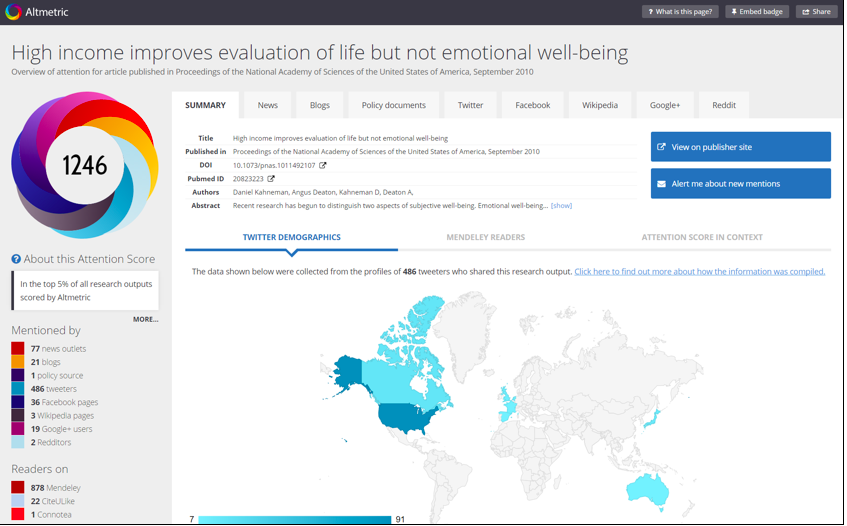

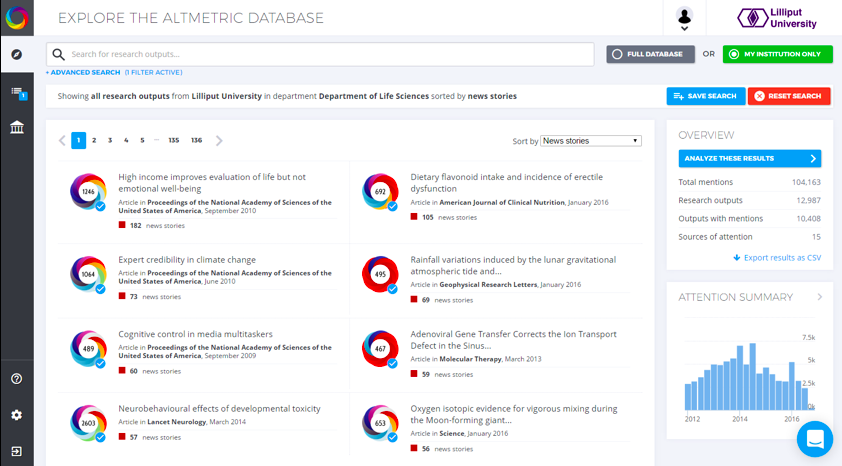

Euan then talked about how Altmetric goes about answering this question, referencing the diagram below:

Euan explained that, if you imagine a scale of 0 to 100 for each of the categories above, it is not always appropriate to assume that higher numbers are better. Sometimes you can get lots of attention and it’s a bad thing; for example, Andrew Wakefield’s paper suggesting a link between the MMR vaccine and autism. Conversely, a more positive example would be Obama’s paper on healthcare in the Journal of the American Medical Association (JAMA), where the attention reflected close scrutiny with a positive outcome.

“Isn’t it cool that POTUS was subject to peer review!”

Altmetric gathers qualitative data to help users determine how a piece of research is being received and what broader impacts it might go on to have.

“It’s more about using data that we can collect qualitatively and quantitatively. Quality is very difficult to metricize. Altmetric works in the following way: for each scholarly output that gets produced – data sets, software, posters, books, articles – a real-time report is produced and continually updated.”

Euan went on to mention all the unique ways in which Altmetric measures activity (see the snapshot above).

Altmetrics compliment citations! #DSwebinar pic.twitter.com/daVToxBSQ3

— Digital Science (@digitalsci) October 13, 2016

Speaking to the complementary nature of Altmetrics and more traditional bibliometrics, citations in particular, Euan noted:

“It’s like peanut butter and jelly, you can have one without the other but ideally you want them both together!”

Importantly, altmetrics do not just apply to journal articles; they can also be used to track the engagement and attention surrounding books and other research outputs. Euan referred to Altmetric’s recent partnership with the Open Syllabus Project:

“Essentially, integrating the Open Syllabus data lets us show the author or publisher of a book where their work is being used in academic reading lists.”

Euan wrapped up his presentation and invited everyone to try out Altmetric via their free browser bookmarklet.

“I think it’s fascinating to see how you continue to engage new pieces of information to measure out engagement and impact,” said Steve Leicht.

Our final panelist was Mike Taylor, Head of Metrics Development, Digital Science, and he started by thanking our keen audience:

“It’s really gratifying to see so many actively involved people following our webinar today”

Mike looked at how we are going to be improving the use of RCR at Digital Science over the next few years:

“A humanities article may take as much as eight years to reach peak citation, a computer science article or a physics letter probably peaks within the first year… for those of us who work in the field, we are aware of these variations, but generally speaking people outside the field don’t understand these dramatic variations. RCR works to normalize – to iron out – those variations, so you can compare articles from different subject areas”

The RCR gives a clear indication of how an article is performing against its peers, independent of field citation rate:

- 1.0 = as expected, > 1.0 is better

- Sophisticated normalization means you can compare articles from different subject areas

- Highly open metric – formulation, data and license

- The statistical characteristics permit robust analysis

Digital Science is making a big commitment to the RCR. RCR is being placed on Symplectic, Dimensions, Figshare, Altmetric and ReadCube.

“It’s a metric you’re going to see a lot of”

Digital Science’s platforms answer particular use cases. Our customers’ varied needs shape our metrics development programme.

“There’s no single metric that can be used for all cases! Unfortunately, we live in a time where the impact factor has dominated people’s thinking in this space. We need to be proud and assertive when saying different metrics answer different cases”

Mike went on to mention the wider context of metrics, saying that as the community moves towards open science, expectations on evaluation and metrics will change. As a consequence, more and more people, including non-specialists, will be making use of metrics and creating reports.

"Openness is a new paradigm" @herrison #DSwebinar pic.twitter.com/SBaX4xj7iX

— Digital Science (@digitalsci) October 13, 2016

RCR is a great combination: it’s easy to understand, incorporates a degree of normalization, builds a future pathway and is open. Altmetrics show a way to understand a wider context.

“With RCR, we are starting to see a breakdown of reliance on journal metrics in terms of understanding the importance of an article metric. Most normalized metrics only look at the subject areas of the article’s journal”

With RCR, an article is compared against others in its co-citation network, not those in the same journal!

“As an article accrues citations, the network increases in diversity, size and stability, and the RCR value matures – it reflects how an article is actually being used!”

Mike talked about network theory, its relevance, and his predictions for the future. Interestingly enough, Google’s search engine and its PageRank algorithm were inspired by Eugene Garfield’s work on bibliometrics! New technological developments such as cloud computing, graph databases and new mathematical techniques are opening up new fields. Mike mentioned a recent example of the application of network theory to the role of dragons in creationist myths: ‘Scientists Trace Society’s Myths to Primordial Origins’.

#DSwebinar @herrison https://t.co/63DvK0r1Ga pic.twitter.com/rOpnCtS7EE

— Digital Science (@digitalsci) October 13, 2016

Mike talked about some more fascinating work on network analyses by Lauren Cadwallader, presented at the 3:AM Altmetrics Conference he had just attended in Romania: ‘Particular patterns of altmetric behavior may indicate high probability of policy impact’.

Mike concluded his fascinating presentation with a strong message:

“I think one of the things we’re going to see over the next few years is an increasing importance in qualitative, descriptive narratives. We have to go beyond the number and recognise that researchers are humans with ambitions wanting to describe their work in a wider context… It’s very hard to do that just in numbers.”

The webinar ended with a lively Q&A debate spearheaded by Steve Leicht; great questions evoked great responses! Using #DSwebinar, our audience was able to interact with our panel, throwing their opinions into the mix. An example of a question put to George can be found below:

Are there plans for the NIH to continue updating the data on iCite in the long term? How is RCR being used at NIH?

“Yes, we’re committed to continuing to support the website. We’ve just added data from 2015, and the coverage includes all papers in PubMed. We’re working with other agencies in the US to expand that beyond just PubMed but, for now, it’s just all papers in PubMed, which includes most of the papers NIH would be evaluating. We don’t use these values in funding decisions; we use them to track the progress of science – to track and compare fields; to establish new funding mechanisms. It allows us to look in the rear view and establish a specific area or hurdle that needs to be overcome or focus on a particular area of research like microbiome research… That’s how we will use this kind of metric. It’s worth pointing out, this does not get at quality, or the importance of the work, or the impact of the work using citation data; it does, however, tell us the influence, a word I like because it covers a lot of the debate about the use of citations. One must, however, take influence with a grain of salt and not use it as a proxy for quality, which has been done mistakenly in the past with regard to the impact factor.”

If you feel you still have something to say – we’re all ears! Tweet us @digitalsci using #DSwebinar.