Understanding and addressing the trust gap in modern research

Trust. Five letters, multiple meanings, immense power. Trust arrives on foot and leaves on horseback.[1] Trust is the basis for society, but foundations are fracturing in a world of growing divides.

Trust in research has never been more important in our lifetimes.

In the vast subway system of the scientific world, we must navigate through research integrity. All who create or consume science are on this journey. How do we safely traverse information and hold on to the sanctity of science? How do we mind the trust gap?

Featured topics

In this TL;DR theme we explore important issues surrounding trust through our blogs, podcasts, social media posts and the events Digital Science will be attending:

- Perception: What does trust in research look like? How can trust in research be a positive force?

- Identity: Is AI a force for good in research? Who (and what) can we trust in an AI future? What are the impacts on universities globally?

- Landscape: What are Trust Markers in a research setting? What are the risks and benefits of using Trust Markers?

- Communications: How do trust and peer review fit together in scholarly communications? How do we translate trust from scholarship to society?

- Networks: Is the breakdown of trust becoming a barrier to collaboration and progress? What role do geopolitical forces play? Is there fragmentation in research that is affecting our trust in processes and publications?

Thought-provoking articles

We’ve curated articles from our in-house experts as well as those in our community to get under the skin of trust in research and what we can all do to safeguard future research integrity.

A conflict of interests – Manipulating peer review or research as usual?

When do shared interests overlap too much for peer reviewers? Examining a case of undeclared conflicts of interest.

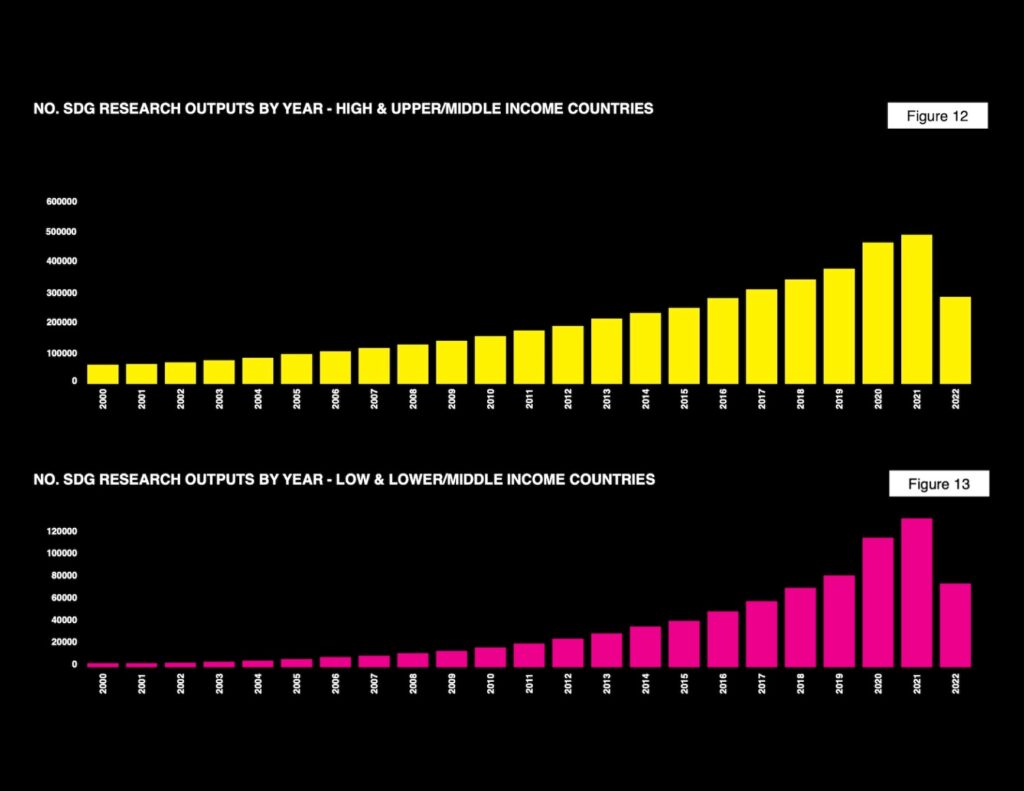

A new white paper on the UN SDGs shows more can be done to increase funding and research recognition for the developing world.

Zooming in on zoonotic diseases

An analysis has revealed disparities in the research effort to combat the growing risk of animal-borne diseases amid climate change.

Reproducibility and research integrity top UK research agenda

Digital Science reflections on the House of Commons Science, Innovation and Technology Committee report on Reproducibility and Research Integrity.

The subtle biases of LLM training are difficult to detect but can manifest themselves in unexpected places. Digital Science CEO Daniel Hook calls this the ‘Lone Banana Problem’ of AI.

A different perspective on responsible AI

How a school science fair inspired a passion for science communication, a PhD in microbiology, and a valuable perspective on the current AI debate.

Building trust in research

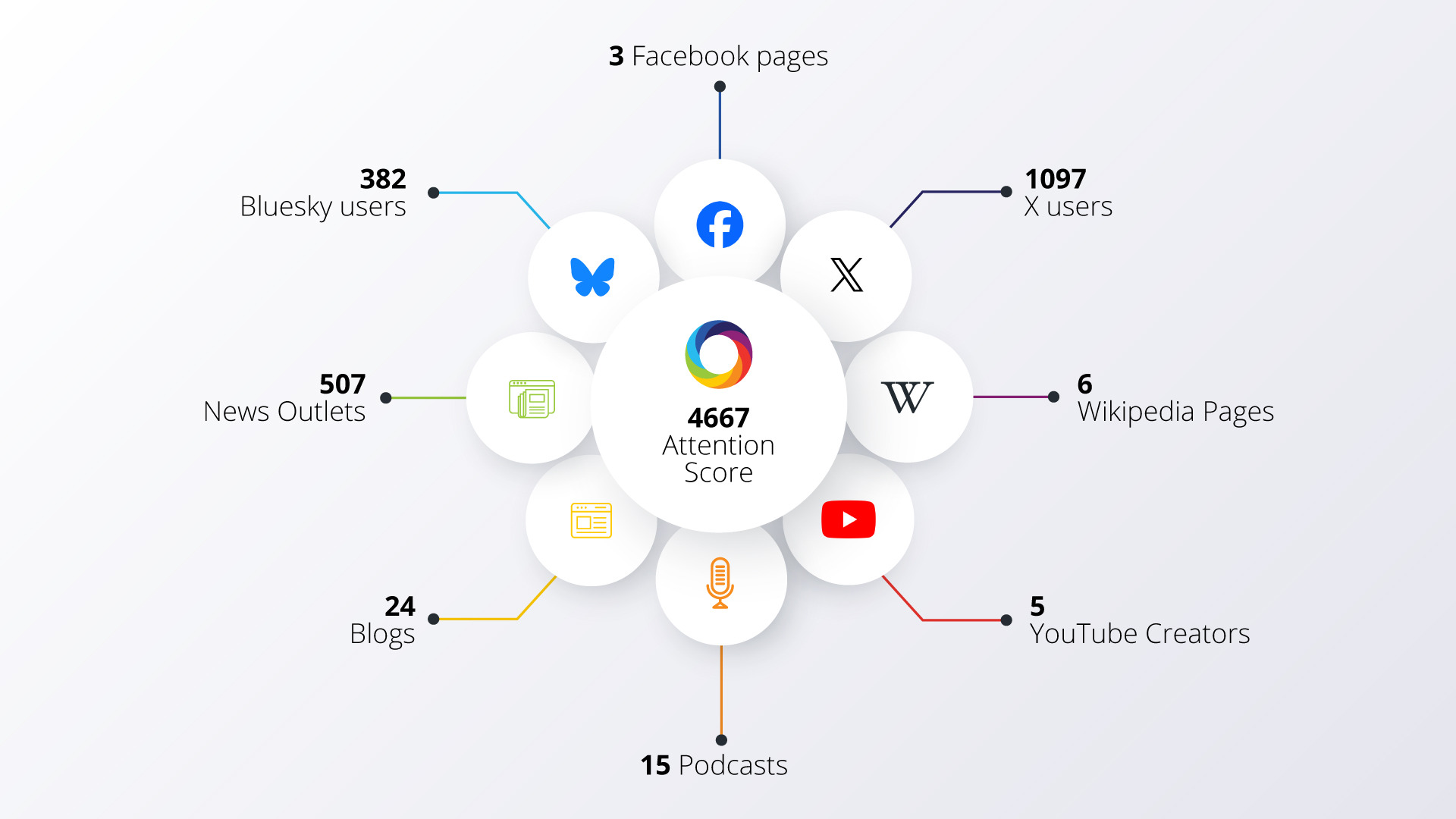

At Digital Science, our tools and services are used daily by millions of researchers and students worldwide. Trust and responsibility to our user community have always been at the core of what we do, and as technology continues to evolve we recognize that we have a role to play in helping to build global trust in research.

We’ve been supporting this since our founding in 2010, with a specific focus in recent years on building practical applications to help, including the investment in and development of the world’s leading tools for building trust in research:

- 2020-2023: Supporting and integrating Ripeta — the world’s leading tool for detecting key indicators of trust — into Digital Science

- February 2023: Dr Leslie McIntosh appointed as first VP Research Integrity at Digital Science

- June 2023: Launch of Dimensions Research Integrity — providing data-driven insights from the world’s largest research integrity dataset

Throughout 2023 we will have a special focus on showcasing the people across Digital Science whose work has a particular relevance to trust in the community. We’ll add those interviews and insights to this post as we publish them.

You can also find us at events and webinars throughout the year, and if you’d like to know more, please get in touch.

Footnotes

1. From the Dutch saying “Vertrouwen komt te voet en gaat te paard.”